The reason why football is so exciting is uncertainty. The outcome of any match or league is unknown, and you get to watch the action unfold without knowing what’s going to happen. Watching matches where you know the score is never exciting.

This weekend the English Premier League season will conclude with little fanfare. Bar one relegation place, the league positions have already been determined. In fact, these positions were, for the most part, decided weeks ago. The element of uncertainty seems to have been reduced this season.

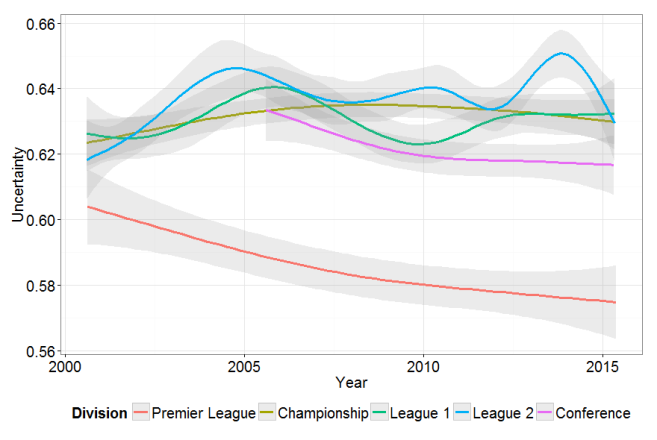

With this in mind, I wanted to look at uncertainty over the long run in English football. To do this used the data provided by http://www.football-data.co.uk/ and analyzed these with R. These data consist of 34,740 matches played in the top 5 divisions of English football between 2000 and 2015, containing information about both the result and the odds offered by bookies on this result.

To measure the uncertainty of any given match I used the following strategy. First, I averaged across all bookies’ odds for the three possible events: home win, draw, and away win. Next I mapped these aggregated odds into probabilities by inverting each of the odds and then dividing by the summed inverted odds. This takes care of the over round that helps bookies to make a profit. For example, if the odds were 2.1/1 that an event happens and 2.1/1 that it doesn’t then the probability of the event occurring is:

(1/2.1)/ (1/2.1 + (1/2.1)) = 0.4761905/(0.4761905+0.4761905) = 0.5.

Finally, to measure the uncertainty of each match, I subtract the probability that the event occurred from 1, to calculate a “residual” score. Imagine a home win occurs. The “residual” in this case will be 1-P(home win). If P(home win)=1, then there is no uncertainty, and this uncertainty score will be zero. Since there are 3 outcomes, we would expect an uncertainty measure to be bounded between 0 (no uncertainty) and 0.67 (pure uncertainty) where we get 1 out of 3right by just guessing.

After importing these data into R and calculating the uncertainty measure, I looked at this uncertainty measure over time. The plot in the above shows fitted smoothed trend lines of uncertainty, stratified by division. These trends are striking. Going by this graph, the Premier League has gotten more predictable over the analysis period. In 2000, the uncertainty measure was around 0.605. Given that we expect this measure to be bound between 0 (complete certainty) and 0.67 (completely random), this tell us that the average league game was very unpredictable. Over time, however, this measure has decreased by about 5%, which does not seem like much. Despite, the somewhat unexciting end to the 2014/15 season, the outcome of the average game is still not very predictable.

Noticeably, in lower league games there is even greater uncertainty. In fact, the average uncertainty measure of League 2 games approached a value of 0.65 in 2014. This indicates that the average League 2 game is about as unpredictable as playing rock-paper-scissors. Interestingly, and unlike the Premier League, there does not appear to be any discernible change over time. The games are just as unpredictable now as they were in 2000. Please see my R code below.

# clear

rm(list=ls())

# libraries

library(ggplot2)

# what are urls

years = c(rep("0001",4), rep("0102",4), rep("0203",4), rep("0405",4),

rep("0506",5), rep("0607",5), rep("0708",5), rep("0809",5),

rep("0910",5), rep("1011",5), rep("1112",5), rep("1213",5),

rep("1314",5), rep("1415",5))

divis = c(rep(c("E0","E1","E2","E3"),4), rep(c("E0","E1","E2","E3","EC"),10))

urls = paste(years, divis, sep="/")

urls = paste("http://www.football-data.co.uk/mmz4281", urls, sep="/")

odds = c("B365H","B365D","B365A",

"BSH","BSD","BSA",

"BWH","BWD","BWA",

"GBH","GBD","GBA",

"IWH","IWD","IWA",

"LBH","LBD","LBA",

"PSH","PSD","PSA",

"SOH","SOD","SOA",

"SBH","SBD","SBA",

"SJH","SJD","SJA",

"SYH","SYD","SYA",

"VCH","VCD","VCA",

"WHH","WHD","WHA")

home = odds[seq(1,length(odds),3)]

draw = odds[seq(2,length(odds),3)]

away = odds[seq(3,length(odds),3)]

# load all data in a loop

full.data = NULL

for(i in 1:length(urls)){

temp = read.csv(urls[i])

# calculate average odds

temp$homeodds = apply(temp[,names(temp) %in% home], 1, function(x) mean(x,na.rm=T))

temp$drawodds = apply(temp[,names(temp) %in% draw], 1, function(x) mean(x,na.rm=T))

temp$awayodds = apply(temp[,names(temp) %in% away], 1, function(x) mean(x,na.rm=T))

temp = temp[,c("Div","Date","FTHG","FTAG","FTR","homeodds","drawodds","awayodds")]

full.data = rbind(full.data, temp)

}

full.data$homewin = ifelse(full.data$FTR=="H", 1, 0)

full.data$draw = ifelse(full.data$FTR=="D", 1, 0)

full.data$awaywin = ifelse(full.data$FTR=="A", 1, 0)

# convert to probs with overrind

full.data$homeprob = (1/full.data$homeodds)/(1/full.data$homeodds+1/full.data$drawodds+1/full.data$awayodds)

full.data$drawprob = (1/full.data$drawodds)/(1/full.data$homeodds+1/full.data$drawodds+1/full.data$awayodds)

full.data$awayprob = (1/full.data$awayodds)/(1/full.data$homeodds+1/full.data$drawodds+1/full.data$awayodds)

# bookie residual

full.data$bookieres = 1-full.data$homeprob

full.data$bookieres[full.data$FTR=="D"] = 1-full.data$drawprob[full.data$FTR=="D"]

full.data$bookieres[full.data$FTR=="A"] = 1-full.data$awayprob[full.data$FTR=="A"]

# now plot over time

full.data$time = ifelse(nchar(as.character(full.data$Date))==8,

as.Date(full.data$Date,format='%d/%m/%y'),

as.Date(full.data$Date,format='%d/%m/%Y'))

full.data$date = as.Date(full.data$time, origin = "1970-01-01")

full.data$Division = "Premier League"

full.data$Division[full.data$Div=="E1"] = "Championship"

full.data$Division[full.data$Div=="E2"] = "League 1"

full.data$Division[full.data$Div=="E3"] = "League 2"

full.data$Division[full.data$Div=="EC"] = "Conference"

full.data$Division = factor(full.data$Division, levels = c("Premier League", "Championship", "League 1",

"League 2","Conference"))

ggplot(full.data, aes(date, bookieres, colour=Division)) +

stat_smooth(size = 1.25, alpha = 0.2) +

labs(x = "Year", y = "Uncertainty") +

theme_bw() +

theme(legend.position="bottom") +

theme(axis.text=element_text(size=20),

axis.title=element_text(size=20),

legend.title = element_text(size=20),

legend.text = element_text(size=20))

Interesting stuff. Surprised be the predictability of premier league versus the others. Perhaps down to more money and effort spent in understanding the teams and their behavior and that is reflected into the odds?

Could be applied to other sports – F1 would be interesting.

Yes, this could be applied to F1. Don’t know where to get the data from however.

Super interesting, but how do you know that what you’re capturing isn’t just bookies setting more precise odds for the PL? I would imagine that’s where most of their profit comes from, so it makes sense for them to invest most in getting those odds correct.

Thanks for an interesting blog!

Yes, I see what you’re saying. The games are just as unpredictable, but the bookies know more. That is possible of course. Thanks for the comment.